TL;DR:

- Securing agentic AI requires comprehensive oversight, governance, and entitlement controls beyond model hardening.

- Operational risks arise from agents reasoning and acting across multiple tools without continuous human approval, necessitating layered security measures.

Most managers assume that securing an agentic AI system comes down to picking a well-aligned model and hardening it against misuse. That assumption is dangerously incomplete. Model hardening alone is not enough; runtime governance, oversight, and entitlements are equally essential. Agentic AI introduces a new category of operational risk because these systems reason, plan, and act across multiple tools and data sources without waiting for human approval at every step. This guide walks through the foundational frameworks, real threat scenarios, measurable performance benchmarks, and step-by-step deployment practices you need to run agentic AI securely and reliably inside your organization.

Table of Contents

- What is secure agentic AI design?

- Common security threats and edge cases in agentic AI

- Benchmarking agentic AI in workflow automation: KPIs and ROI

- Best practices for secure agentic AI deployment in your organization

- Ailerons IT’s perspective: why ‘control’ is the true differentiator in agentic AI security

- Leverage Ailerons IT for secure agentic AI implementation

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Security is systemic | Securing agentic AI requires oversight, governance, and controlled autonomy, not just model hardening. |
| Anticipate unique threats | Edge cases like prompt injection and cascading failures need proactive design and mitigations. |
| Measure operational impact | Track KPIs such as completion rates, cost per task, and time to close to gauge agentic AI effectiveness. |
| Best practices matter | Progressive deployment, human-in-the-loop, and transparent monitoring are critical for success and safety. |
| Organizational control wins | Effective runtime governance and escalation pathways maximize reliability and ROI in agentic automation. |

What is secure agentic AI design?

Agentic AI refers to software systems that can pursue multi-step goals autonomously. Unlike a rule-based bot that executes a fixed script, an agentic system interprets context, selects tools, and adjusts its approach based on intermediate results. That flexibility is exactly what makes it powerful for operational workflows. It is also what makes security design fundamentally different from classic automation.

Secure agentic AI design means building the entire system, not just the model, to behave safely and predictably. Three core concepts anchor this work.

Entitlements define what an agent is permitted to access and act upon. Think of entitlements as the keys an employee is allowed to carry. Giving an agent unrestricted access to your CRM, ERP, and file storage simultaneously creates massive exposure if something goes wrong.

Defense-in-depth means layering security controls so that no single failure produces a catastrophic outcome. One layer might validate inputs; another monitors runtime behavior; a third enforces human approval for sensitive actions.

Bounded autonomy describes limiting how far an agent can act before requiring confirmation. A well-bounded agent completes routine tasks independently but stops and escalates when it encounters an action outside its defined scope.
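
To make entitlements and bounded autonomy concrete, here is a minimal Python sketch of a deny-by-default authorization check. The `Entitlements` class, the tool names, and the 500.0 limit are all hypothetical and chosen only for illustration; a real deployment would tie this to your identity and policy systems.

```python
from dataclasses import dataclass, field
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"        # agent may act on its own
    ESCALATE = "escalate"  # pause and ask a human
    DENY = "deny"          # outside the agent's entitlements


@dataclass(frozen=True)
class Entitlements:
    """Hypothetical per-agent policy: permitted tools and which ones need sign-off."""
    allowed_tools: frozenset
    needs_approval: frozenset = field(default_factory=frozenset)
    max_amount: float = 0.0  # largest value the agent may handle without confirmation


def authorize(policy: Entitlements, tool: str, amount: float = 0.0) -> Decision:
    # Entitlement check: anything not explicitly granted is denied.
    if tool not in policy.allowed_tools:
        return Decision.DENY
    # Bounded autonomy: sensitive tools or large amounts stop and escalate.
    if tool in policy.needs_approval or amount > policy.max_amount:
        return Decision.ESCALATE
    return Decision.ALLOW


# Illustrative policy for a billing agent (all names are assumptions).
billing_agent = Entitlements(
    allowed_tools=frozenset({"crm.read", "invoice.create", "payment.approve"}),
    needs_approval=frozenset({"payment.approve"}),
    max_amount=500.0,
)

print(authorize(billing_agent, "crm.read"))                 # Decision.ALLOW
print(authorize(billing_agent, "invoice.create", 2_000.0))  # Decision.ESCALATE
print(authorize(billing_agent, "payment.approve", 50.0))    # Decision.ESCALATE
print(authorize(billing_agent, "erp.write"))                # Decision.DENY
```

The point of the design is that the check sits outside the model: the agent can propose any action, but the policy decides whether it runs, waits for a human, or is refused.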

Two frameworks are shaping how organizations approach trustworthy agentic systems today. The CoSAI Principles for Secure-by-Design Agentic Systems outline defense-in-depth as the baseline, with oversight, governance, and Zero Trust entitlements as non-negotiable requirements. The Safer Agentic AI Framework adds a Weighted Factors Analysis model, helping stakeholders score and prioritize safety requirements based on use case risk.

| Framework | Core method | Key requirement |
|---|---|---|
| CoSAI Principles | Defense-in-depth, Zero Trust | Oversight, bounded agents, governance |
| Safer Agentic AI | Weighted Factors Analysis | Stakeholder safety scoring |
| NIST AI RMF | Risk mapping, documentation | Accountability, transparency |

Key principles that follow from these frameworks include:

- Minimize agent privileges to the narrowest scope needed for each task

- Require explicit human approval for irreversible or high-value actions

- Log every agent decision with enough context to reconstruct what happened and why

- Design for failure by assuming agents will encounter unexpected inputs

- Apply decision logic in agentic AI that includes hard constraints, not just soft guidelines

These principles are not optional extras. They are the difference between a system that operates reliably under real-world conditions and one that introduces new operational and compliance risk.
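
As one way to picture the logging principle, the sketch below builds a single JSON line per agent decision using only the Python standard library. The field names and the `audit_record` helper are assumptions made for this example, not a schema taken from any of the frameworks above.

```python
import json
from datetime import datetime, timezone


def audit_record(agent_id, task_id, tool, arguments, decision, reason):
    """Build one JSON line describing a single agent decision (hypothetical schema)."""
    return json.dumps(
        {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "agent_id": agent_id,
            "task_id": task_id,
            "tool": tool,
            "arguments": arguments,  # what the agent tried to do
            "decision": decision,    # e.g. "allow", "escalate", "deny"
            "reason": reason,        # why, so the event can be reconstructed later
        },
        sort_keys=True,
    )


line = audit_record(
    agent_id="billing-agent-1",
    task_id="task-0042",
    tool="payment.approve",
    arguments={"invoice": "INV-1001", "amount": 1250.0},
    decision="escalate",
    reason="amount exceeds autonomous limit",
)
print(line)
```

Recording the arguments and the reason alongside the decision is what lets an auditor reconstruct both what happened and why.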

Common security threats and edge cases in agentic AI

With the definition of secure agentic AI in place, the next priority is to surface exactly where and how things can go wrong, often in ways traditional security playbooks do not anticipate.

Classic automation security concerns center on access control and data integrity. Agentic AI inherits all of those concerns and adds several new ones. The core issue is that an agent’s power to interpret context and select tools dynamically creates attack surfaces that do not exist in rule-based systems.

Here are the four most operationally significant threats:

- Prompt injection: A malicious actor embeds instructions inside content the agent processes, such as an email, a document, or a web page. The agent reads this content as part of a legitimate task and inadvertently follows the embedded instructions. In an office workflow context, this could redirect a billing agent to approve a fraudulent payment.

- Tool access escalation: An agent begins a task with limited permissions, then requests access to additional tools as the task evolves. Without hard limits on dynamic permission grants, the agent can accumulate access far beyond what was originally intended.

- Tool shadowing: A compromised or misconfigured tool returns misleading outputs that cause the agent to take incorrect downstream actions. Because the agent trusts its tool results, a poisoned tool can steer the entire workflow off course.

- Cascading failures in multi-agent systems: When multiple agents hand off tasks to one another, a failure or compromise in one agent can propagate through the chain. This is the agentic equivalent of a supply chain attack, and it is especially dangerous because each handoff point multiplies the exposure.

Edge case mitigations for these threats include least-privilege tool access, strict argument validation before any tool call executes, and explicit action confirmation steps for sensitive operations. Dynamic policy enforcement and real-time monitoring are also highlighted in current security research as essential for agentic systems facing sophisticated attackers.
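
Strict argument validation can be sketched as a check that runs before any tool call executes. The `TOOL_SCHEMAS` table, the tool name, and the 10,000 cap below are hypothetical; the idea is simply that unknown tools, unexpected arguments, and out-of-range values are all rejected before the agent's request reaches a real system.

```python
from typing import Any

# Hypothetical schema: for each tool, the arguments it accepts and a check per argument.
TOOL_SCHEMAS = {
    "payment.approve": {
        "invoice_id": lambda v: isinstance(v, str) and v.startswith("INV-"),
        "amount": lambda v: (
            isinstance(v, (int, float))
            and not isinstance(v, bool)
            and 0 < v <= 10_000
        ),
    },
}


def validate_call(tool: str, args: dict[str, Any]) -> list[str]:
    """Return a list of problems; an empty list means the call may proceed."""
    schema = TOOL_SCHEMAS.get(tool)
    if schema is None:
        return [f"unknown tool: {tool}"]
    problems = []
    # Reject arguments the schema does not know about (e.g. injected overrides).
    for name in sorted(args.keys() - schema.keys()):
        problems.append(f"unexpected argument: {name}")
    for name, check in schema.items():
        if name not in args:
            problems.append(f"missing argument: {name}")
        elif not check(args[name]):
            problems.append(f"invalid value for {name}: {args[name]!r}")
    return problems


print(validate_call("payment.approve", {"invoice_id": "INV-1001", "amount": 250}))
print(validate_call("payment.approve",
                    {"invoice_id": "INV-1001", "amount": 250, "payee_override": "x"}))
print(validate_call("payment.approve", {"invoice_id": "1001", "amount": -5}))
print(validate_call("shell.exec", {}))
```

A caller would execute the tool only when the returned list is empty and would route everything else to logging or human review.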

| Threat | Risk level | Key mitigation |
|---|---|---|
| Prompt injection | High | Input sanitization, sandboxed execution |
| Tool access escalation | High | Hard permission caps, no dynamic grants |
| Tool shadowing | Medium | Tool output validation, audit logging |
| Cascading multi-agent failure | High | Quarantine logic, inter-agent trust limits |
| Memory poisoning | Medium | Isolated memory scopes, integrity checks |

The challenge of security in agentic systems is that a minor edge case in testing can become a systemic incident in production. A billing agent that mishandles one injected prompt in a low-volume test environment might process thousands of transactions before anyone notices an anomaly at scale.

Pro Tip: When testing agentic workflows, deliberately introduce adversarial inputs at every external data entry point, including inbound emails, uploaded documents, and API responses from third-party services. Do not limit red-teaming to the model layer alone.

Benchmarking agentic AI in workflow automation: KPIs and ROI

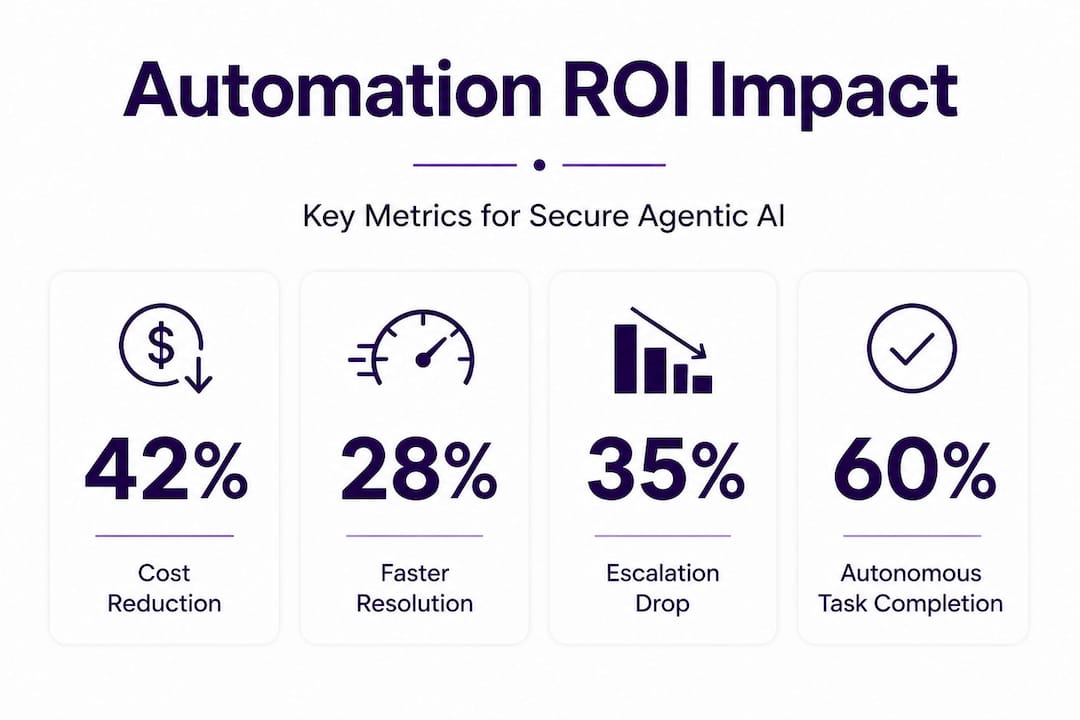

With the key risks and mitigations mapped out, the next question is why secure agentic AI is worth getting right. The answer is measurable improvement in operational performance and ROI.

The business case for agentic AI is not theoretical. Organizations deploying these systems in production are tracking concrete KPIs that demonstrate real impact. The numbers vary by use case and industry, but the directional results are consistent.

Enterprise benchmarks show that agentic AI systems achieve task completion rates between 72% and 84%, depending on domain complexity. Escalation rates, meaning the share of tasks requiring human intervention, run between 15% and 25%. Mean time to close (MTTC) for resolved tasks drops from an average of 4.2 hours under manual workflows to as little as 8 to 15 minutes. Cost per successfully completed task lands in the range of $0.08 to $0.35, which compares favorably against manual labor costs in most operational settings.

KPI takeaways for operations and finance teams:

- Task completion rate is the primary health metric; values below 70% indicate either model limitations or poor workflow design

- Escalation rate above 30% signals that the agent’s decision boundaries are too narrow or its training data is insufficient for the use case

- MTTC improvement is the clearest efficiency gain to present to leadership; hours-to-minutes compression is compelling in IT ops, customer service, and compliance workflows

- Cost per task should be measured per successful outcome, not per attempt; a system with 90% accuracy but 50% completion rate is not delivering value

- Track error recovery time separately; systems that self-correct quickly are substantially more valuable than those requiring manual restart

Domain-tuned agents consistently outperform general-purpose models on cost-normalized performance benchmarks for enterprise tasks. This matters practically because a general model may achieve higher raw accuracy on a benchmark while delivering worse cost and reliability outcomes in a real workflow with unpredictable inputs.
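
The KPI definitions above can be computed from per-task records in a few lines. This is a minimal sketch: the `TaskResult` fields and the sample data are invented for illustration, and the MTTC figures reuse the 4.2-hour (252-minute) baseline and the 8 to 15 minute range quoted earlier.

```python
from dataclasses import dataclass


@dataclass
class TaskResult:
    completed: bool  # agent finished the task successfully
    escalated: bool  # task was handed to a human
    cost_usd: float  # spend attributed to this attempt


def kpis(results: list[TaskResult]) -> dict[str, float]:
    if not results:
        raise ValueError("need at least one task result")
    total = len(results)
    successes = sum(r.completed for r in results)
    spend = sum(r.cost_usd for r in results)
    return {
        "completion_rate": successes / total,
        "escalation_rate": sum(r.escalated for r in results) / total,
        # Total spend (including failed attempts) divided by successes only.
        "cost_per_success": spend / successes if successes else float("inf"),
        "cost_per_attempt": spend / total,
    }


def pct_reduction(before: float, after: float) -> float:
    """Percentage reduction from a baseline value to a new value."""
    return 100.0 * (before - after) / before


# Illustrative sample: 8 completed tasks and 2 escalations at $0.25 per attempt.
sample = [TaskResult(True, False, 0.25)] * 8 + [TaskResult(False, True, 0.25)] * 2
print(kpis(sample))

# MTTC: 4.2 hours = 252 minutes, compared with 15 and 8 minutes.
print(round(pct_reduction(252, 15), 1))  # 94.0
print(round(pct_reduction(252, 8), 1))   # 96.8
```

Note how cost per success (0.3125 in this sample) is higher than cost per attempt (0.25): the failed attempts still cost money, which is why the per-outcome figure is the one to report.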

| KPI | Manual baseline | Agentic AI range | Improvement |
|---|---|---|---|
| Task completion rate | 95% (human) | 72% to 84% | Autonomous, scalable |
| Mean time to close | 4.2 hours | 8 to 15 minutes | 94% to 97% reduction |
| Cost per task | Varies | $0.08 to $0.35 | Significant reduction |
| Escalation rate | N/A | 15% to 25% | Tracks oversight need |

The implication for AI in business process management is direct. Getting the security architecture right is not just a compliance exercise. A secure system is a reliable system, and a reliable system is the only kind that actually delivers on these performance numbers. Poor security design leads to errors, rollbacks, and escalations that eat into the very efficiency gains you are trying to capture. Agentic systems transforming work at the operational level require security and performance to be designed together, not sequentially.

Best practices for secure agentic AI deployment in your organization

To turn evidence and frameworks into results, let’s step through the recommended practices for running agentic AI securely in your workflows.

The key mistake organizations make is treating deployment as a one-time event. Secure agentic AI is an ongoing operational discipline, not a setup checklist. Here is a practical implementation sequence:

- Define task scope and entitlements before deployment. Every agent should have a written specification of what tools it can access, what data it can read and write, and what actions require human confirmation. This document becomes the baseline for auditing.

- Apply least privilege as a hard constraint. Do not grant agents access to systems they might need eventually. Start with the minimum required for the current task set. Expand entitlements only through a formal review process.

- Implement progressive autonomy. Begin with a supervised mode where agents propose actions for human approval. Track accuracy and escalation rates over two to four weeks. Expand autonomous execution only to task categories that consistently meet your accuracy threshold.

- Set up runtime monitoring and anomaly detection. Log every agent action, tool call, and decision point. Define normal behavior ranges for your KPIs. Trigger alerts when any metric falls outside acceptable bounds.

- Build quarantine and escalation logic. Agents amplify mundane failures; multi-agent cascades require quarantine logic to contain failures before they propagate. Design your orchestration layer to isolate an agent that exhibits anomalous behavior and route its pending tasks to human review automatically.

- Conduct regular red-team exercises. Test your agents against adversarial inputs quarterly. Include prompt injection attempts, tool output manipulation, and unexpected permission requests. Document findings and update your entitlement policies accordingly.

- Maintain transparent telemetry. CoSAI guidelines specifically require transparent telemetry so that oversight teams can understand what agents are doing in real time, not just after incidents occur.
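
The monitoring and quarantine steps in the sequence above might look like the following sketch. The `Orchestrator` class, the sliding-window size, and the error-rate threshold are assumptions for illustration; a production orchestration layer would track more signals than a single success flag.

```python
from collections import deque


class Orchestrator:
    """Minimal sketch: quarantine an agent whose recent error rate leaves the normal range."""

    def __init__(self, window: int = 20, max_error_rate: float = 0.3):
        self.window = window
        self.max_error_rate = max_error_rate
        self.outcomes = {}      # agent_id -> deque of recent True/False outcomes
        self.quarantined = set()
        self.human_review = []  # task ids rerouted to people

    def record(self, agent_id: str, ok: bool) -> None:
        history = self.outcomes.setdefault(agent_id, deque(maxlen=self.window))
        history.append(ok)
        # Only judge once the window is full, to avoid reacting to one early failure.
        if len(history) == self.window:
            error_rate = history.count(False) / self.window
            if error_rate > self.max_error_rate:
                self.quarantined.add(agent_id)

    def dispatch(self, agent_id: str, task_id: str) -> str:
        # Quarantined agents receive no work; their tasks go to human review instead.
        if agent_id in self.quarantined:
            self.human_review.append(task_id)
            return "human_review"
        return "agent"


orch = Orchestrator(window=5, max_error_rate=0.4)
for ok in [True, False, False, True, False]:
    orch.record("invoice-agent", ok)

print(orch.dispatch("invoice-agent", "task-7"))  # human_review
print(orch.dispatch("crm-agent", "task-8"))      # agent
```

Keeping the quarantine decision in the orchestration layer, rather than inside the agent, means a misbehaving agent cannot talk its way out of isolation.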

“Governance is not a gate you pass through before going live. It is the ongoing process that keeps agentic systems aligned with business intent as conditions change.”

Pro Tip: Build a separate escalation queue that captures every task your agent declined to complete autonomously. Review this queue weekly. It is the most reliable signal for identifying where your agent’s decision logic needs refinement and where your entitlement policies may be too restrictive or too permissive.
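
A weekly review of that queue can start as a simple count of why tasks were escalated. The records, categories, and reasons below are made up for the example; the sketch only shows the grouping step.

```python
from collections import Counter

# Hypothetical escalation records: (task category, reason the agent declined to act).
escalations = [
    ("refund", "amount over limit"),
    ("refund", "amount over limit"),
    ("refund", "missing order id"),
    ("vendor_onboarding", "tool not permitted"),
    ("vendor_onboarding", "tool not permitted"),
    ("vendor_onboarding", "tool not permitted"),
    ("password_reset", "low confidence"),
]


def weekly_summary(records):
    """Count escalations per (category, reason), most frequent first."""
    return Counter(records).most_common()


for (category, reason), count in weekly_summary(escalations):
    print(f"{count}x {category}: {reason}")
```

In this invented data, repeated "tool not permitted" escalations would prompt an entitlement review, while "low confidence" cases point toward decision logic rather than permissions.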

Process management with AI at scale requires this kind of structured feedback loop. Organizations that skip the review cycle tend to experience confidence gaps, where teams stop trusting the system because they cannot see inside it, and compliance gaps, where auditors cannot reconstruct what the agent did and why.
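
The same feedback loop can drive the progressive autonomy decision from the implementation sequence. The sketch below assumes weekly accuracy figures per task category collected during supervised mode; the 0.95 threshold, the two-week minimum, and the category names are illustrative choices, not recommended values.

```python
def categories_ready_for_autonomy(weekly_accuracy, threshold=0.95, min_weeks=2):
    """Return categories whose accuracy met the threshold in each of the last `min_weeks` weeks."""
    ready = []
    for category, history in weekly_accuracy.items():
        recent = history[-min_weeks:]
        # Require a full run of qualifying weeks; too little history never qualifies.
        if len(recent) == min_weeks and all(a >= threshold for a in recent):
            ready.append(category)
    return sorted(ready)


# Hypothetical supervised-mode results, one accuracy value per week.
supervised_results = {
    "password_reset": [0.97, 0.98, 0.99],
    "refund": [0.96, 0.91, 0.97],
    "vendor_onboarding": [0.99],
}

print(categories_ready_for_autonomy(supervised_results))  # ['password_reset']
```

Only `password_reset` qualifies here: `refund` dipped below the threshold in one of its last two weeks, and `vendor_onboarding` has too little history to judge.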

Ailerons IT’s perspective: why ‘control’ is the true differentiator in agentic AI security

Most conversations about agentic AI security focus on the model: which foundation model is most aligned, how thoroughly it has been fine-tuned, what guardrails are built in at the inference layer. Those are valid considerations. But in practice, they are not the primary driver of whether a deployment succeeds or fails.

What actually determines outcomes is operational control. Organizations that invest in governance structures, clear escalation paths, and real-time oversight consistently outperform those that prioritize model selection alone. This is not an argument against choosing good models. It is an argument for recognizing that control at the system and process level has a larger multiplier effect on reliability and safety.

Here is an analogy that holds up under scrutiny. A skilled employee with no defined authority structure, unclear escalation paths, and no performance feedback loop creates risk regardless of their individual competence. The same is true for an agentic system. The model is the employee; governance is the management structure. Both matter, but organizations that fix management problems by hiring better employees tend to repeat the same failures with each new hire.

The real differentiator for mid-sized organizations is adaptive control. Static policies that were correct at deployment gradually drift out of alignment as workflows evolve, new data sources are added, and business requirements shift. Fast feedback loops, regular entitlement reviews, and domain-specific governance are what keep a system aligned over time. This is what operationalizing agentic AI actually looks like in practice, not a launch event but a continuous discipline.

The organizations getting the best ROI from agentic AI are not necessarily those with the most advanced models. They are the ones that built the oversight infrastructure first and treated every anomaly as a governance signal rather than a technical glitch.

Leverage Ailerons IT for secure agentic AI implementation

If you’re ready to put these principles and practices into action, our team can help guide your organization from design to deployment. Ailerons.ai specializes in designing and operating secure agentic AI systems with governance structures built in from the start, covering entitlement design, runtime monitoring, escalation logic, and integration with your existing CRM, ERP, and document platforms. You can review real-world agentic AI case studies to see how organizations like yours have moved from manual workflows to reliable agentic automation. For tailored support, our AI and IT consulting team is available to assess your current environment and design a deployment roadmap that fits your operational risk tolerance and performance goals.

Frequently asked questions

What makes agentic AI different from classic automation?

Agentic AI operates autonomously, making contextual decisions and executing tasks with greater flexibility than rule-based automation. In the enterprise benchmarks cited above, agents complete 72% to 84% of tasks through contextual adaptation rather than fixed scripts.

How do you measure the success of secure agentic AI deployments?

Track KPIs like task completion rate, cost per task, escalation rate, and mean time to close for each automation initiative. Enterprise benchmarks show task completion at 72% to 84%, cost per task at $0.08 to $0.35, and MTTC reductions that significantly outperform manual workflows.

What is the greatest security risk unique to agentic AI?

Prompt injection and dynamic access escalation are especially dangerous because they exploit the agent’s core reasoning capability rather than a software bug. Least privilege controls and real-time argument validation are the primary technical mitigations.

Can agentic AI reduce operational costs and improve ROI?

Yes. Secure agentic AI improves ROI by automating tasks at lower cost per completion and dramatically reducing resolution times. MTTC drops from an average of 4.2 hours to as little as 8 to 15 minutes for automated tasks, with cost per task well below manual workflow equivalents.

Is model hardening enough for secure agentic AI?

No. Runtime governance and entitlement design have a larger practical impact on security outcomes than model-level hardening alone. True security requires system-level design choices that extend well beyond the model itself.
