TL;DR:

- AI-first operating models position AI agents as primary operators, with humans supervising processes.

- Successful implementation requires outcome-driven redesign, precise data definition, and real-time ROI tracking.

- Mid-sized firms should start with high-impact, routine workflows like invoicing for effective AI integration.

Most business leaders believe they are making progress on AI by adding new tools to existing workflows. They install a chatbot here, automate a report there, and call it digital transformation. The problem is that this approach rarely delivers the efficiency gains they expect. AI-first operating models do not layer intelligence on top of old structures. They rebuild the structure itself, placing AI agents at the center of operations and repositioning human teams as supervisors and decision-makers. This guide explains what that shift looks like and how to execute it in a mid-sized organization.

Table of Contents

- Defining the AI-first operating model

- Core principles and methodologies of AI-first design

- Why mid-sized firms should start with high-impact workflows

- How to measure success and sustain improvement

- The uncomfortable truth: AI-first isn’t about automation, it’s about reinvention

- Explore AI-first success stories and expert guidance

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| AI-first shifts core workflows | AI agents operate at the heart of business processes, while humans supervise rather than execute tasks. |
| Redesign, don’t bolt on | Layering AI atop outdated processes leads to failure; start with outcome-driven workflow redesign. |
| Measure adoption and trust | Track both technical and cultural indicators like AI usage, employee confidence, and ongoing ROI. |
| Prioritize high-impact tasks | Begin with administrative areas where automation delivers clear, measurable benefits and rapid wins. |
| Commit to iterative improvement | Continuous feedback and supervision are essential for optimizing AI-first operating models over time. |

Defining the AI-first operating model

The term “AI-first” gets used loosely, but it has a precise meaning in an operational context. An AI-first operating model redesigns organizational structure, processes, and workflows around AI agents as primary operators, with humans in supervisory roles, rather than layering AI onto human-centric processes. That distinction matters more than it sounds.

In a traditional operating model, a human employee owns a workflow from start to finish. AI might assist by flagging anomalies or generating a draft, but the human still drives every step. In a bolt-on AI model, the same human-centric process stays intact, but AI tools are inserted at specific points to speed up individual tasks. Neither approach changes how work is structured at a fundamental level.

An AI-first model flips that logic. The AI agent is the primary operator. It receives inputs, applies decision logic, executes steps across connected systems, and routes exceptions to a human only when the situation falls outside defined parameters. The human role shifts from doing the work to setting the rules, reviewing exceptions, and refining the system over time.

Key differences at a glance:

| Feature | Traditional model | Bolt-on AI model | AI-first model |
|---|---|---|---|
| Primary operator | Human | Human | AI agent |
| Human role | Executor | Executor with AI assist | Supervisor and exception handler |
| Scalability | Limited by headcount | Marginally improved | Scales without proportional staffing |
| Process design | Human-centric | Human-centric with tools | AI-centric with human oversight |
| Error handling | Manual review | Partial automation | Automated with escalation logic |

The benefits of this structure are significant. Scalability improves because AI agents can handle volume increases without additional hiring. Consistency improves because agents follow defined logic without fatigue or variation. Adaptability improves because agents can be updated with new rules or connected to new data sources faster than retraining a human team.

Key characteristics of a well-designed AI-first model include:

- Goal orientation: Agents are given objectives, not just task lists. They determine how to reach the goal within defined constraints.

- Context awareness: Agents pull relevant data from connected systems before acting, rather than operating on isolated inputs.

- Decision logic: Agents apply conditional rules and escalate when conditions are not met.

- Human integration: Humans remain in the loop for exceptions, approvals, and ongoing refinement.

For a broader view of how this connects to operational strategy, the AI-driven operations guide provides useful context on building AI-centered workflows from the ground up. You can also review AI process management strategies for a closer look at how these models apply across different business functions.

Core principles and methodologies of AI-first design

Now that the model is defined, it is crucial to know which foundational principles make it work in the real world. Defining the model is one thing. Building it correctly is another.

The methodologies behind AI-first design are outcome-driven redesign; adaptive loops of simulation, testing, and refinement; clear data definitions; production expertise in prototyping; and real-time ROI tracking. Each of these principles serves a specific function in keeping the model reliable and improving over time.

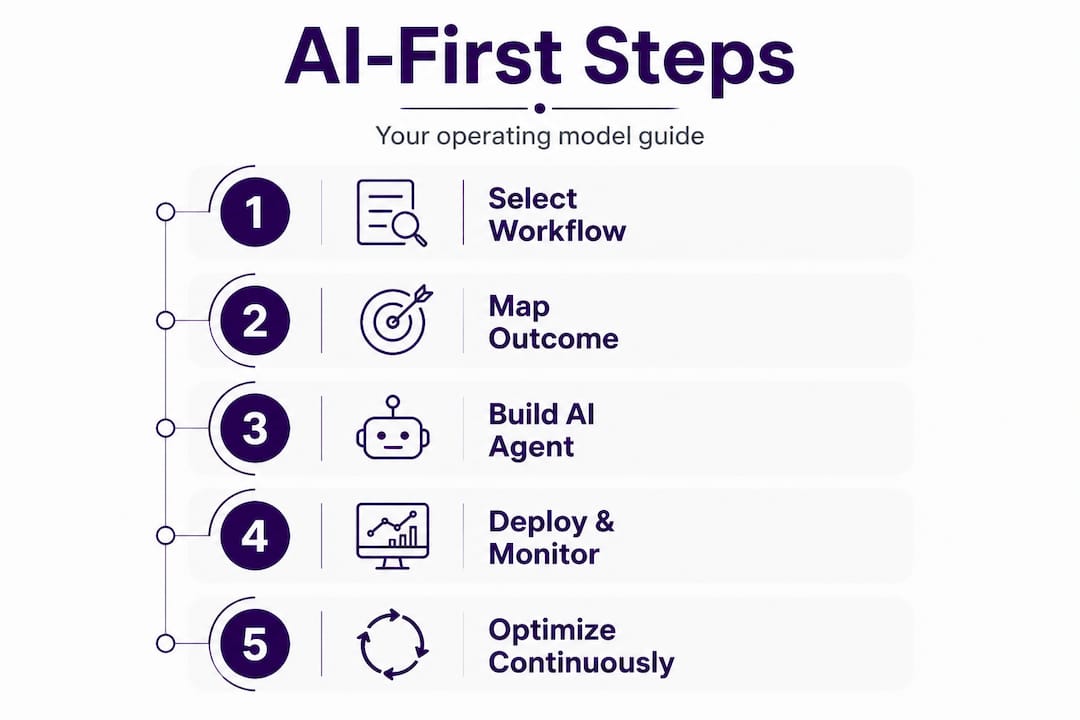

The five core principles, in order of implementation:

1. Think outcome-first. Start by asking: “If we had unlimited intelligence and information, what would this process look like?” This removes the constraint of current limitations and forces teams to design toward the ideal state, not an incremental improvement of the current one.

2. Define inputs and outputs precisely. Every AI agent needs clear data definitions. What information does it receive? What decision does it make? What output does it produce? Vague definitions lead to unpredictable behavior and erode trust in the system.

3. Build adaptive loops. AI-first systems are not set-and-forget. They require structured cycles of testing, simulation, and refinement. Each loop should produce measurable learning that feeds back into the model’s behavior.

4. Develop production-ready prototyping expertise. Moving from a working prototype to a production-grade system is where many organizations stall. Teams need the technical skill and organizational support to close that gap quickly, because a prototype that never reaches production delivers no value.

5. Track ROI in real time. Unlike traditional projects with quarterly reviews, AI-first implementations require continuous measurement. If a workflow is not performing, the feedback loop should surface that within days, not months.
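To make the second principle concrete, here is a minimal Python sketch of what "precise inputs and outputs" could look like for a single agent. The field names (`document_id`, `amount`, `source_system`), the `Decision` values, and the validation rules are all hypothetical, chosen only for illustration; a real agent would define these from its own workflow.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    """The only decisions this hypothetical agent is allowed to make."""
    APPROVE = "approve"
    ESCALATE = "escalate"


@dataclass(frozen=True)
class AgentInput:
    """What the agent receives (illustrative fields, not a standard schema)."""
    document_id: str
    amount: float
    source_system: str


@dataclass(frozen=True)
class AgentOutput:
    """What the agent produces: one decision plus the reason for it."""
    document_id: str
    decision: Decision
    reason: str


def validate_input(item: AgentInput) -> list[str]:
    """Return the definition violations found; an empty list means usable input."""
    problems = []
    if not item.document_id:
        problems.append("document_id is empty")
    if item.amount <= 0:
        problems.append("amount must be positive")
    if not item.source_system:
        problems.append("source_system is empty")
    return problems
```

Writing the definitions as explicit types means a vague or malformed input is rejected before the agent acts on it, which is one way to keep behavior predictable.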

Methodologies by workflow stage:

| Stage | Method | Goal |
|---|---|---|
| Design | Outcome-first mapping | Define the ideal end state |
| Build | Precise data definition | Ensure agent reliability |
| Test | Adaptive simulation loops | Identify failure points early |
| Deploy | Production prototyping | Move from concept to operation |
| Measure | Real-time ROI tracking | Sustain and improve performance |

Pro Tip: Before writing any technical requirements for an AI agent, write a one-paragraph description of the ideal outcome as if the system already exists and works perfectly. This keeps design decisions anchored to value rather than technical convenience.

For practical steps on improving existing workflows before introducing AI agents, the AI workflow improvement guide covers the groundwork that makes AI-first design more effective. If you are looking at office-specific rollouts, the AI office automation steps outline a structured approach to phased implementation.

Why mid-sized firms should start with high-impact workflows

With the framework clear, let us focus on how to tactically roll out these changes for maximum effect. Mid-sized companies face a specific challenge: they have enough operational complexity to benefit from AI-first design, but not always the resources to transform everything at once. The answer is selective, sequenced deployment.

For mid-sized firms, the recommended approach has five parts: start with high-impact workflows such as administrative tasks; build context and tool layers first; implement supervision and feedback to reduce overhead; measure adoption, trust, and ROI dynamically; and avoid bolt-on AI to prevent failure cascades. Each of these points reflects a hard lesson learned from organizations that moved too fast or too broadly.

What makes a workflow a good starting point?

- It has measurable administrative overhead. You can quantify how many hours per week it consumes.

- It involves routine decision points. The decisions follow patterns that can be codified into agent logic.

- Reliable data already exists. The agent has clean inputs to work with from day one.

- Errors are recoverable. If the agent makes a mistake, a human can catch and correct it without serious consequences.

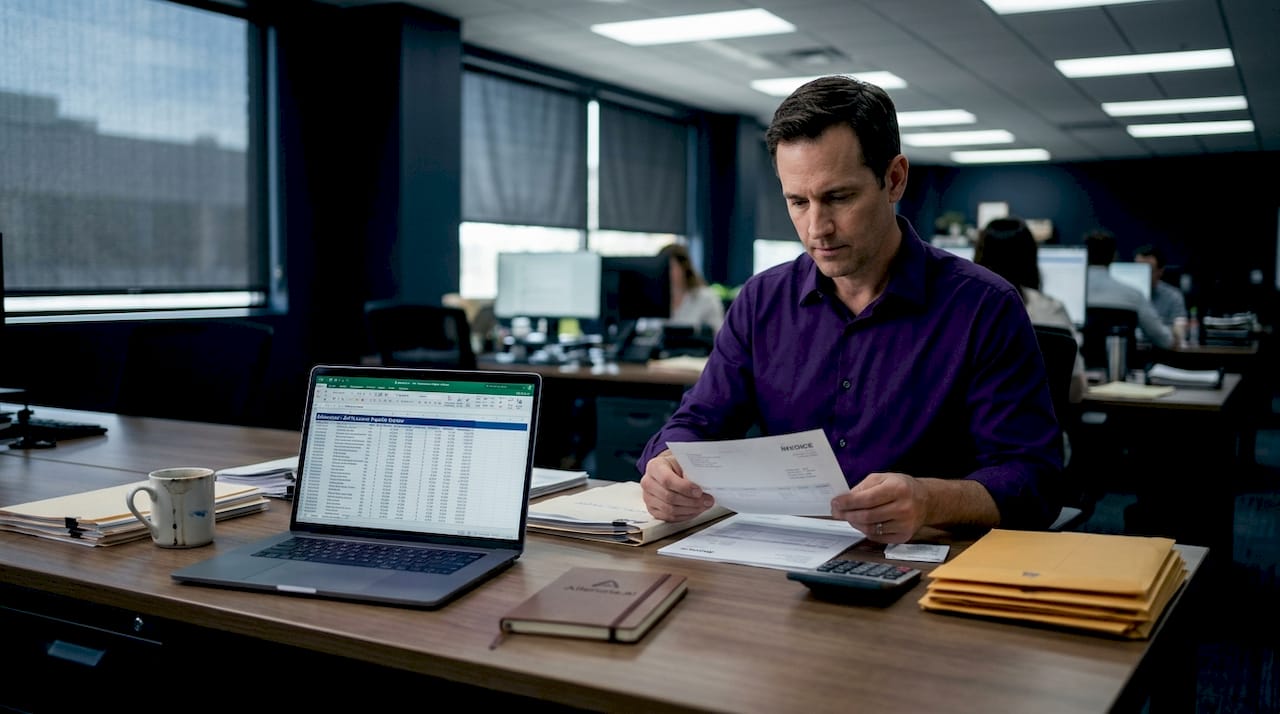

A practical example: invoice processing. In most mid-sized companies, accounts payable involves receiving invoices, matching them to purchase orders, flagging discrepancies, routing for approval, and logging the payment. Each step follows defined rules. The data exists in accounting and ERP systems. Errors are visible and correctable. This is exactly the kind of workflow where an AI agent can take over primary execution while a human reviews exceptions.
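The invoice example can be sketched in a few lines of Python. This is an illustrative outline only: the `Invoice` and `PurchaseOrder` records, the `TOLERANCE` and `APPROVAL_LIMIT` values, and the `route_invoice` function are assumptions made for the sketch, not part of any particular accounting or ERP system.

```python
from dataclasses import dataclass


@dataclass
class Invoice:
    invoice_id: str
    po_number: str
    amount: float


@dataclass
class PurchaseOrder:
    po_number: str
    amount: float


# Hypothetical thresholds; a real deployment would set these per policy.
TOLERANCE = 0.01         # allowed relative difference between invoice and PO
APPROVAL_LIMIT = 10_000  # amounts above this always go to a human


def route_invoice(invoice, purchase_orders):
    """Return (action, reason), where action is 'auto_approve' or 'escalate'."""
    po = purchase_orders.get(invoice.po_number)
    if po is None:
        return "escalate", "no matching purchase order"
    if abs(invoice.amount - po.amount) > TOLERANCE * po.amount:
        return "escalate", "amount differs from purchase order"
    if invoice.amount > APPROVAL_LIMIT:
        return "escalate", "amount exceeds auto-approval limit"
    return "auto_approve", "matches purchase order within tolerance"


# Usage: purchase orders keyed by PO number, as they might be loaded from an ERP export.
orders = {"PO-100": PurchaseOrder("PO-100", 500.0)}
print(route_invoice(Invoice("INV-1", "PO-100", 500.0), orders))
print(route_invoice(Invoice("INV-2", "PO-999", 120.0), orders))
```

The agent handles the routine path on its own and returns a reason with every escalation, so the human reviewer sees why an invoice landed in the exception queue.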

Contrast that with a workflow like strategic vendor negotiation. The decisions are contextual, relationship-dependent, and high-stakes. That is not a good early candidate for AI-first redesign, even if it consumes significant management time.

What to avoid:

- Installing AI plugins on top of a broken process. If the process has inconsistent data or unclear rules, the AI agent will amplify those problems, not fix them.

- Skipping the supervision phase. Even well-designed agents need a period of human oversight before trust is established. Rushing to full automation increases the risk of undetected errors compounding over time.

- Measuring success too early. Give the system enough cycles to stabilize before drawing conclusions about performance.

Pro Tip: Map your top ten administrative workflows by weekly hours consumed and decision complexity. The ones in the high-hours, low-complexity quadrant are your best starting candidates for AI-first redesign.
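The quadrant mapping in the tip above can be done in a spreadsheet, but a short Python sketch shows the logic. The 1-to-5 complexity scale, the cutoffs (`min_hours`, `max_complexity`), and the sample workflows with their numbers are all made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class Workflow:
    name: str
    weekly_hours: float
    complexity: int  # 1 (routine) to 5 (highly contextual); a hypothetical scale


def starting_candidates(workflows, min_hours=10.0, max_complexity=2):
    """Return high-hours, low-complexity workflows, largest time sink first."""
    picked = [
        w for w in workflows
        if w.weekly_hours >= min_hours and w.complexity <= max_complexity
    ]
    return sorted(picked, key=lambda w: w.weekly_hours, reverse=True)


# Illustrative data only; replace with your own workflow inventory.
workflows = [
    Workflow("Invoice processing", 25, 2),
    Workflow("Vendor negotiation", 15, 5),
    Workflow("Expense report checks", 12, 1),
    Workflow("Office supply orders", 3, 1),
]

for w in starting_candidates(workflows):
    print(f"{w.name}: {w.weekly_hours} h/week, complexity {w.complexity}")
```

With these sample numbers, vendor negotiation is filtered out for complexity and office supply orders for low hours, which matches the reasoning in the section.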

For more targeted guidance on identifying the right starting points, AI operational efficiency tips covers workflow selection in practical terms. The AI workflow decision logic resource explains how to structure the conditional rules that agents use to make reliable decisions.

How to measure success and sustain improvement

Once workflows are selected and deployed, the final step is knowing how to measure and enhance ongoing success. Without clear metrics, AI-first initiatives drift. Leaders lose confidence, teams revert to manual processes, and the investment stalls.

Outcome-driven redesign requires adaptive loops, clear data definitions, production expertise, and real-time ROI tracking. That last element, real-time ROI tracking, is what separates sustainable AI-first programs from one-time projects.

Four metrics every AI-first program should track:

1. AI adoption rate. What percentage of eligible workflow steps are currently handled by AI agents rather than humans? A rising adoption rate indicates that the system is earning trust and expanding its operational footprint. Stagnant adoption often signals a training gap or a trust issue that needs to be addressed directly.

2. Employee trust and satisfaction. This is measured through regular surveys and usage data. If employees are overriding agent decisions frequently, that is a signal. It might mean the agent logic needs refinement, or it might mean employees need more exposure to how the system works. Either way, it is actionable information.

3. Error rate reduction. Compare the error rate of AI-handled steps against the historical error rate for the same steps when handled manually. This is one of the most persuasive metrics for leadership, because it ties directly to cost and compliance risk.

4. Time saved per workflow cycle. Track the average time from workflow initiation to completion before and after AI-first implementation. Even a 30% reduction in cycle time across ten workflows produces a measurable impact on operational capacity.
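As a rough sketch, the four metrics reduce to simple ratios. The function names and the sample figures below are assumptions for illustration; the override rate is used here as one possible usage-data proxy for trust, alongside surveys.

```python
def adoption_rate(ai_steps, eligible_steps):
    """Share of eligible workflow steps handled by AI agents."""
    return ai_steps / eligible_steps if eligible_steps else 0.0


def override_rate(overridden, ai_decisions):
    """Share of agent decisions that employees overrode (a trust signal)."""
    return overridden / ai_decisions if ai_decisions else 0.0


def error_rate_reduction(manual_error_rate, ai_error_rate):
    """Relative reduction in error rate versus the manual baseline."""
    if manual_error_rate == 0:
        return 0.0
    return (manual_error_rate - ai_error_rate) / manual_error_rate


def cycle_time_reduction(before_minutes, after_minutes):
    """Relative reduction in average time from initiation to completion."""
    if before_minutes == 0:
        return 0.0
    return (before_minutes - after_minutes) / before_minutes


# Illustrative numbers only.
print(f"Adoption: {adoption_rate(30, 40):.0%}")
print(f"Overrides: {override_rate(5, 100):.0%}")
print(f"Error reduction: {error_rate_reduction(0.08, 0.02):.0%}")
print(f"Cycle time reduction: {cycle_time_reduction(50, 35):.0%}")
```

Computing these from workflow logs on a daily or weekly schedule, rather than at quarterly reviews, is what makes the tracking "real time" in the sense used here.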

Worth noting: organizations that build structured feedback loops and real-time performance tracking into their AI deployments tend to be better positioned to sustain efficiency gains beyond the first year than those that measure only at project milestones.

The measurement framework should be reviewed monthly in the first six months, then quarterly once the system stabilizes. Each review should produce at least one specific adjustment, whether to agent logic, data inputs, or human oversight protocols.

For a broader view of where AI measurement practices are heading, AI trends for office operations covers the metrics and tools gaining traction in 2026. If you want to benchmark your current setup against proven implementations, top AI solutions for offices provides a practical comparison.

The uncomfortable truth: AI-first isn’t about automation, it’s about reinvention

Here is what most articles on this topic will not tell you. The organizations that fail at AI-first transformation do not fail because the technology does not work. They fail because leadership treats it as a technical upgrade rather than an organizational redesign.

The pattern is consistent. A company identifies a promising workflow, deploys an AI agent, sees some initial gains, and then hits a ceiling. The ceiling is not a technical limitation. It is the legacy thinking embedded in the process itself. The agent is fast and consistent, but it is executing a process that was designed for human limitations, not AI capabilities. The gains plateau because the underlying structure was never rebuilt.

The leaders who break through that ceiling do something uncomfortable. They start with a blank slate. They ask what the process would look like if it were designed from scratch today, with AI agents as the primary operators and humans as supervisors. That question produces very different answers than “how do we automate what we already do?”

This is also a cultural commitment, not just a technical one. Teams that have built their professional identity around executing workflows will need to rebuild that identity around supervising and improving AI-driven systems. That transition takes time, clear communication, and visible leadership support. Without it, even well-designed AI agents get quietly bypassed by employees who prefer the familiar.

The process automation tutorial on agentic AI and compliance offers a concrete example of how this reinvention plays out in a high-stakes operational context, where the cost of getting it wrong is visible and the benefit of getting it right is measurable.

The bottom line: AI-first is not a shortcut to efficiency. It is a commitment to rebuilding how work gets done. Leaders who treat it as anything less will get incremental results. Leaders who treat it as a structural redesign will get transformational ones.

Explore AI-first success stories and expert guidance

If you are working through how to apply these principles in your own organization, seeing how other mid-sized companies have navigated the transition is one of the fastest ways to accelerate your thinking. The AI-first case studies at Ailerons.ai document real implementations across administrative, billing, compliance, and document management workflows, showing what worked, what required adjustment, and what the measurable outcomes looked like. For organizations that want structured support in designing and deploying an AI-first model, Ailerons.ai provides consulting and implementation services built around agentic AI architecture. The goal is to move from concept to operational deployment with a clear plan and measurable targets from the start.

Frequently asked questions

What is the main difference between AI-first and traditional operating models?

AI-first models put AI agents at the core of workflows, with humans supervising rather than executing. Traditional models keep humans as the executors, and bolt-on approaches add AI on top of those human-centric processes without changing the underlying structure.

How can mid-sized companies safely begin an AI-first transformation?

Start by redesigning high-impact administrative workflows with measurable overhead, build supervisory feedback loops from day one, and track adoption, trust, and ROI in real time rather than waiting for quarterly reviews to assess performance.

What metrics should be tracked to prove AI-first model success?

Key metrics include AI adoption rate across eligible workflows, employee trust scores, error rate reduction compared to manual baselines, and total time saved per workflow cycle, all reviewed on a consistent schedule.

What is one common mistake companies make when implementing AI?

Adding AI tools on top of legacy processes without redesigning the underlying structure causes failure cascades, because the agent amplifies existing inconsistencies rather than resolving them.
